Total Skill Collapse Is How AI Makes Idiocracy a Reality

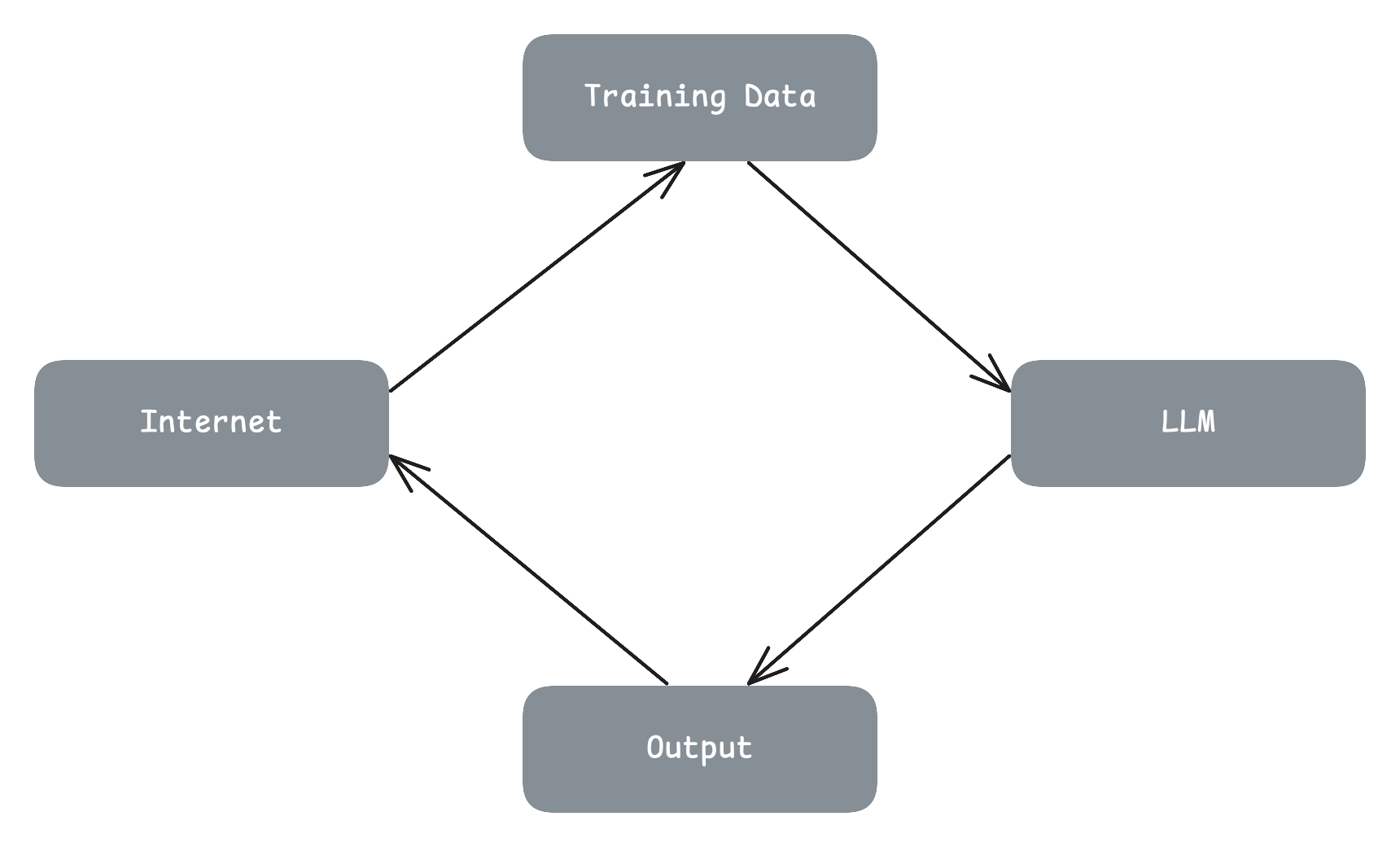

A lot has been written about model collapse, the process where Large Language Models (LLMs) recursively degrade as they train on their own outputs. This outcome seems obvious and inevitable when we consider LLMs in the context of a broader system over time. We know AI generated output is commonly created to be shared on the internet, and thus will eventually be incorporated into subsequent models. We can think of this system as Internet => Training Data => LLM => Output => Internet.
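To make that loop concrete, here is a toy sketch in Python. It is not how LLMs are trained; it assumes the "model" is just a bell curve fitted to a handful of samples, and that each new generation sees only the previous generation's output. The function name, sample size, and generation count are all made up for illustration. Even in this stripped-down version, the spread of the data (the "edges") tends to shrink with each pass through the loop.

```python
import random
import statistics


def simulate_collapse(generations=100, sample_size=10, seed=0):
    """Repeatedly refit a Gaussian to samples drawn from the previous fit.

    Returns the fitted standard deviation at each generation, starting
    with a hypothetical original distribution (mean 0, std 1).
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # assumed starting point, standing in for human data
    history = [std]
    for _ in range(generations):
        # "Output": the current model generates the next training set.
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        # "Training": the next model is fitted only to that output.
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)
        history.append(std)
    return history


if __name__ == "__main__":
    history = simulate_collapse()
    for generation in (0, 10, 50, 100):
        print(f"generation {generation:3d}: std = {history[generation]:.6f}")
```

The small sample size is deliberate: each refit loses a little of the tails, and with nothing fresh coming in, those losses compound.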

But what if we expand the scope of this system further? How did the original training data arrive on the internet in the first place? Why was it created and shared? By whom and for what purpose?

The Economics of Attention

By some estimates, the internet contributes $4.9 trillion of economic activity. From content to code, there's a plethora of ways to monetize human endeavor and creativity.

It's a safe assumption, then, that much of the content on the internet was directly or indirectly created because it made someone money. Reddit, Facebook, Twitter, etc. provided services that encouraged users to contribute content so they could monetize attention by selling it to advertisers. Services like YouTube encouraged creators directly by financially rewarding them through monetization, a necessity given the high cost of video production and deep expertise required to create high quality long-form content.

Meanwhile in software, GitHub supported open source developers by providing a platform for supporters to subscribe with regular monthly donations. Companies often paid open source project maintainers to work full time on their open source projects because it commoditized their complements, or because it helped them recruit top software engineering talent in a supply-favorable market. The most well-known example is the JavaScript framework wars of the 2010s between Facebook (React) and Google (Angular).

These online creators are not exclusively amateurs and hobbyists. $4.9 trillion of economic activity tends to both attract and develop experts in various fields. These include visual artists who have developed their skills over decades-long careers, writers who have honed their craft with the support and feedback of incredibly experienced and talented editors, software engineers (some of whom created the very infrastructure powering this online economy) who have likewise developed incredibly deep expertise over decades-long careers, and many more craftspeople of varied disciplines. It's impossible to fathom the billions of hours of human effort behind this $4.9 trillion of economic activity.

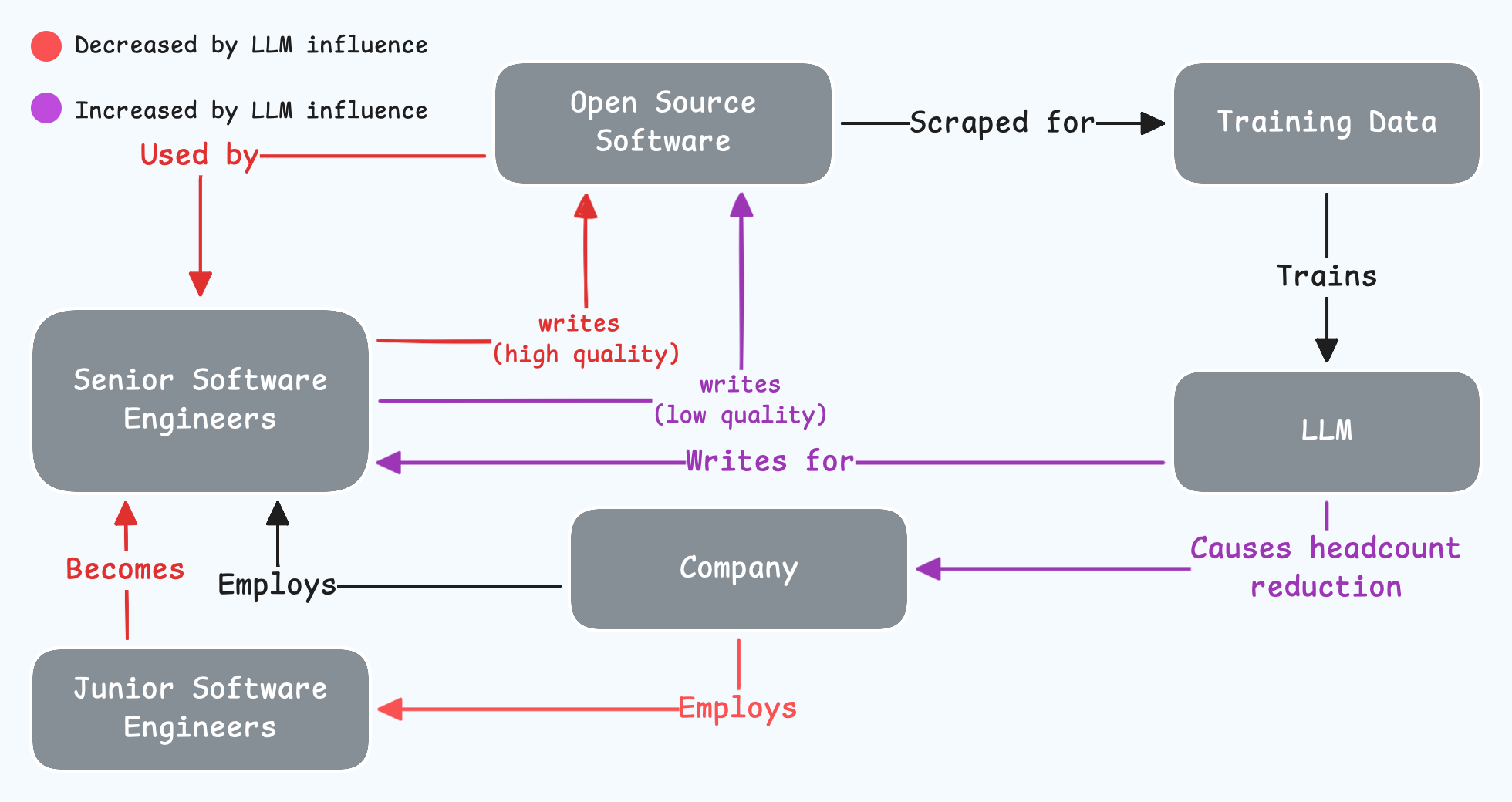

How will generative AI impact these online economies? How will it change the incentives of the various actors in these systems? These actors are the creators, platforms (Facebook, YouTube, Google, etc.), generative AI providers, and audiences/users. We can already see some of these changes with the proliferation of slop content and the reduction of both entry-level and senior positions in related industries. Companies that no longer hire junior software engineers rob the future of open source contributors writing code for LLMs to train on.

Software engineering is a large industry with many supporting services such as recruiters, organizational researchers, etc. This means we have access to a lot more data about hiring trends, productivity changes, etc, which we can cautiously extrapolate to less heavily scrutinized industries. We'll come back to software engineering later in the article. First, let's explore some of the aforementioned actors in the attention economy.

A Conflict of Incentives: The Attention Economy in the Age of AI

When people pick up their phones to use social media platforms, they have some expectations about what they'll see and how it will make them feel. These expectations might be unconscious, but they exist. One of the reasons these platforms are so addictive is that the reward of using them is unpredictable. Will we see a funny video? Something that motivates us? Something that makes us angry? Something a bit boring? Or maybe something so incredible we want to share it with a friend? This unpredictability and variable reward creates an addictive habit loop that keeps us coming back.

Before generative AI, incentives were broadly aligned across creators, platforms, and users. Creators were rewarded for creating good content, platforms were rewarded with attention for serving entertaining content, and users paid for entertainment with the minutes of their lives and conscious awareness. While users definitely got the short end of the stick in this deal, it was, on the whole, sustainable for all parties.

What happens when AI generated content starts flooding these platforms? The ratio of engaging content to boring content goes out of whack. AI generated content is easy to create, making low quality content profitable by lowering the breakeven point of invested effort.

A well made video from a YouTube channel with at least one hundred thousand subscribers might cost ~$5,000 to produce “by hand”. Very high quality videos, such as those created by Veritasium, can cost tens of thousands of dollars, or more. A slop-prompter (let's not call them creators) might only pay $5 to $50 to generate a knockoff video in the style of Kurzgesagt. We've changed the unit economics of entire industries by 3 to 4 orders of magnitude without considering second and third-order effects nor the medium to long-term impact.
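For anyone who wants to check the arithmetic, here is a quick back-of-the-envelope sketch. The $5,000, $5, and $50 figures come from the paragraph above; pinning "tens of thousands of dollars" to $50,000 is my own assumption for illustration, as are the labels and the function name. Against the cheapest $5 knockoff, the gap is three orders of magnitude for a typical video and four for a high-end one; against a $50 knockoff it narrows to two and three.

```python
import math

# Figures quoted in the paragraph above. The high-end figure is an
# assumption: "tens of thousands of dollars" is pinned to 50,000 here
# purely for illustration.
HUMAN_COSTS = {"typical video": 5_000, "high-end video (assumed)": 50_000}
GENERATED_COSTS = {"cheap knockoff": 5, "pricier knockoff": 50}


def orders_of_magnitude(human_cost, generated_cost):
    """Return log10 of the cost ratio between human and generated work."""
    return math.log10(human_cost / generated_cost)


if __name__ == "__main__":
    for human_label, human_cost in HUMAN_COSTS.items():
        for gen_label, gen_cost in GENERATED_COSTS.items():
            gap = orders_of_magnitude(human_cost, gen_cost)
            ratio = human_cost // gen_cost
            print(f"{human_label} vs {gen_label}: {ratio}x, about {gap:.0f} orders of magnitude")
```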

These ridiculous, and heavily subsidized, AI-era unit economics lead to AI-generated content slopifiers inundating platforms with low quality content, subsequently disincentivizing human creators from investing the requisite effort to produce high quality content. At the same time, this onslaught of slop makes finding high quality content difficult for audiences.

The attention economy has been irrevocably changed from a quality game to a quantity game. It's also become a zero-sum game: slop-slingers need the high quality content they are inadvertently diluting to bring people to the platforms serving their slop, but the sloppist that stops slopping s-loses. The monthly recurring user base built by the creators and the platforms has become the commons tragically and inevitably destroyed by these slopholes.

Platforms: Fiefdoms of Technofeudal Delusion

Platforms need to protect creators of high quality content, but they're in an arms race with AI-wielding creators and the generative AI providers themselves. This arms race means platforms cannot easily disincentivize AI-generated content without undermining incentives for human creators. In some cases they're internally conflicted, because they are a generative AI provider and a social media platform — e.g. YouTube and Google being the same corporation, or Meta being a dumpster fire.

Then there's the fact that people don't actually enjoy AI generated content as much nor for as long. In a recent survey conducted by writing platform Ellipsus, 97.5% of 4,449 respondents stated that they actively avoid content they suspect is AI generated. Only 0.1%, six respondents out of 4,449, stated that it was unimportant to them whether or not a piece of writing was authored by a human. There is no organic demand for AI generated content; instead, generated content acts as, at best, a kind of imperfect substitute.

In fact I think it's more accurate to categorize AI generated content as a type of forgery. AI-plagiarists rely on people being unable to identify their content as AI generated. A video of a kid playing an incredible drum solo is entertaining if it's genuine, but infuriating if it's AI generated. Sharing the former can be a platonic love language between friends, but sharing the latter is just embarrassing. Regardless, the person that prompted and uploaded the generated video is rewarded for tricking some people, and pissing off others. If AI generated content’s value is predicated on not being identified as AI generated but passing as human created, then what else can we call it except a forgery?

This threat of being slopboozled on social media means the presence and prevalence of AI slop forces people to invest effort discerning between AI generated forgery and genuine human endeavour, or give up and give in to the avalanche of slop. This is a problem for platforms because they need doomscrolling to be a passive activity, but AI slop is turning doomscrolling into a frustrating and mentally taxing pastime.

Crucially, this is a problem for human creators, especially up and coming creators. The risk of being slopboozled plus the mental exhaustion of continual discernment against AI content means the potential audience of new creators is less likely to discover them. In some cases, a creator and potential fan miss out on each other because the latter incorrectly writes off a new creator’s hard work as AI-generated; an incredibly frustrating experience for the creator, I can assure you.

At a high level, not only are the incentives for creating high quality content being reduced, but also the incentives – the hope of future reward – for developing the necessary skills to create high quality content. This means creators are less likely to take creative risks and try something new or a bit different. And even if they wanted to, they might never develop the skills required to pull it off since they never went full time and instead became a carpenter.

So while model collapse causes models to regress towards the mean, losing the edges — the diversity in their data — generative AI also causes the same regression in human creativity by perverting the incentives and destroying the economics that supported the development of the talented experts who produce the content gen-AI needs for continued training. As a result, model collapse accelerates, and is simultaneously accelerated by, the de-skilling of the human population. This is the kind of self reinforcing feedback loop that causes cascading catastrophic collapse in ecological systems, financial systems, businesses, logistics warehouses, and any other similar system. This simultaneous collapse of both synthetic and human skill is a process I call Total Skill Collapse.
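Here is a deliberately crude sketch of that coupled loop, to show the shape of the argument rather than to predict anything. Every number and rule in it is an assumption I've invented for illustration: human skill and model quality both start at 1.0, a fixed volume of slop competes with human output, humans lose skill in proportion to how much of the content pool is synthetic, and each model is trained on a mix of human work and slightly degraded output from the previous model.

```python
def simulate(steps=100, slop_volume=0.5, discouragement=0.1, fidelity=0.9):
    """Toy coupled loop between human skill and model quality.

    All parameters are hypothetical:
      slop_volume     fixed amount of synthetic content per step
      discouragement  how strongly synthetic share erodes human skill
      fidelity        fraction of quality a model keeps from its own output
    Returns a list of (human_skill, model_quality) pairs, one per step.
    """
    human, model = 1.0, 1.0
    history = [(human, model)]
    for _ in range(steps):
        # Share of the content pool that is synthetic right now.
        synthetic_share = slop_volume / (slop_volume + human)
        # The next model trains on human work plus degraded model output.
        next_model = (1 - synthetic_share) * human + synthetic_share * fidelity * model
        # Humans invest less in skill the more slop they compete with.
        next_human = human * (1 - discouragement * synthetic_share)
        human, model = next_human, next_model
        history.append((human, model))
    return history


if __name__ == "__main__":
    decoupled = simulate(discouragement=0.0)  # humans unaffected by slop
    coupled = simulate()                      # humans discouraged by slop
    print(f"model quality, humans unaffected: {decoupled[-1][1]:.3f}")
    print(f"model quality, humans discouraged: {coupled[-1][1]:.3f}")
    print(f"human skill, humans discouraged:   {coupled[-1][0]:.3f}")
```

With `discouragement` set to zero, model quality in this toy settles at a degraded but stable level, because steady human work keeps feeding it. Once humans are discouraged too, there is no floor: as human skill shrinks, the synthetic share grows, which shrinks human skill faster, and model quality follows it down.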

AI Makes You Stupid, Ya Goose!

I've been a software engineer for roughly 15 years. I don't want to brag, but I might be a bit good at it. That tends to happen when you've suffered an unhealthy obsession with something for over a decade. Not only was I not impressed by AI coding, I was surprised by how impressed other engineers were. As I watched people in my field respond to AI, I noticed something I'm a little ashamed to speak aloud: the better someone was at coding, the less impressed they were, and conversely… the worse someone was at coding, the more they were impressed by AI.

I hate this line of thinking, but it's crucially important to link this concept with another uncovered by researchers: using AI makes people overestimate their performance. Now think about what happens when the people who have the furthest to go developing their skills start using a tool that makes them wildly overestimate their performance.

Let's go back to incentives again for a moment. AI labs are not incentivized to make tools to help you do good work. They're financially incentivized to provide AI tools that make you feel good when you use them to do your work. That's a distinction that's hard to notice when you're using the tool, but it's incredibly fucking important! It has an enormous impact on the outcomes.

Think about it. Their target metrics aren't going to be the average code quality of every open source repository, the amount of new revenue your company generated by having AI summarize emails written (with AI assistance) to the CEO, or the average number of friends their users had engaging conversations with this week. No, their target metrics are going to be average time spent chatting, thirty day retention, etc. Those metrics don't require you to do good work, they require you to feel good using AI. Those metrics don't care if you get AI psychosis, become terrible at your job, or gaslight your spouse.

So if AI labs are incentivized to create a tool that makes you feel good using it, how do they do that? The answer is with a technique called Reinforcement Learning from Human Feedback (RLHF) where they use human feedback to make AIs as addictive as possible. Training AI to give us responses that we like the most, that make us feel the best they could possibly make us feel, is only ever going to lead us towards one outcome: sycophancy.

Imagine you're talking to the smartest person you think you've ever spoken to, who seems to know everything about every topic, and they think your ideas are fantastic. How do you think that would make you feel? How would some of the people in your life feel? The silliest person you know is being told “you're absolutely right!” by AI on a regular basis.

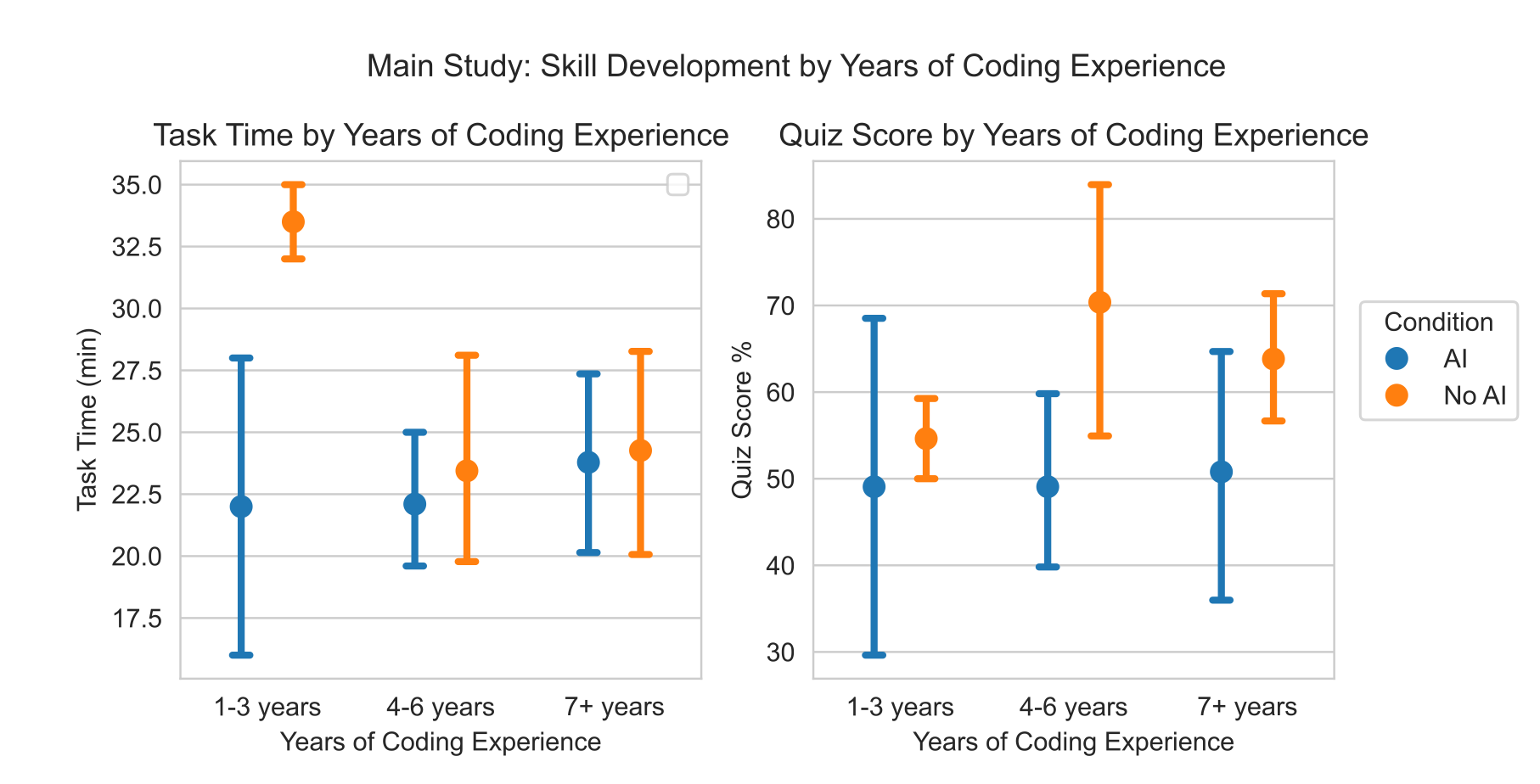

This isn't theoretical, it's not just deductive reasoning, we can see this in the data. In a study on How AI Impacts Skill Formation, they had software engineers complete a task and then take a quiz afterwards. Here are the results:

In these results, AI assistance does not meaningfully improve the productivity of experienced engineers. But when it comes to how much the engineers learned after the task, AI’s stupefying effects do not discriminate on experience, at all. Everyone that used AI came out of that task stupider than the people that did it themselves.

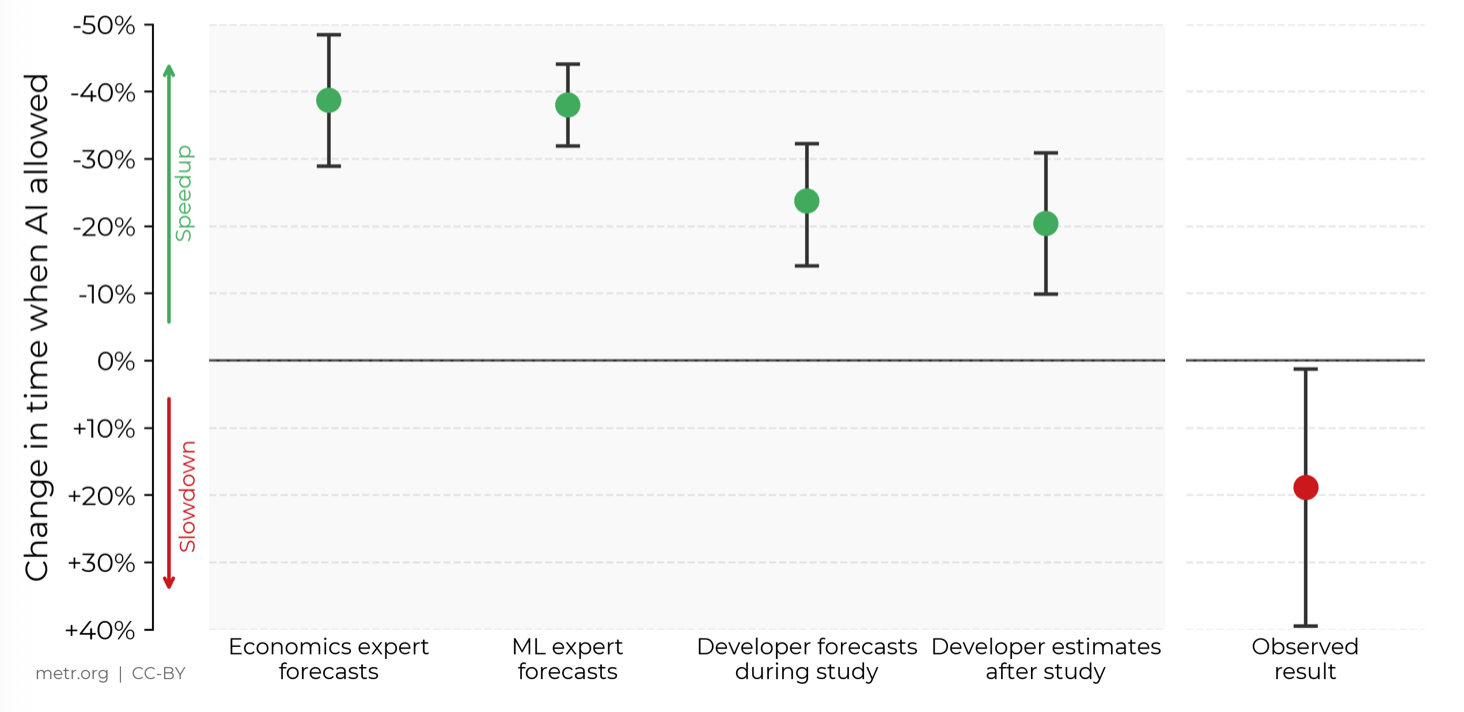

In another study, software engineers predicted that using AI would help them complete tasks 24% faster, then after they completed the tasks they estimated that it only made them 20% faster, but in actuality they performed 19% slower than those not using AI. Take a look at this insanity:

As a software engineer I'll tell you a secret — we estimate how productive we've been by thinking about how good we felt while we were coding! Everything else we say is just rationalizing a feeling.
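To put a number on how far that feeling drifted from reality, here is a tiny sketch using the three figures quoted above. It treats them as signed percentage changes in speed (positive is faster with AI, negative is slower), which is my simplification; the variable and function names are made up for illustration.

```python
# Signed change in task completion speed, in percent, as quoted above.
# Positive means faster with AI, negative means slower.
predicted = 24   # forecast before the tasks
perceived = 20   # self-estimate after the tasks
measured = -19   # what was actually observed


def gap_in_points(believed, actual):
    """Percentage-point distance between a belief and the measurement."""
    return believed - actual


if __name__ == "__main__":
    print(f"forecast vs measured:      {gap_in_points(predicted, measured)} points")
    print(f"self-estimate vs measured: {gap_in_points(perceived, measured)} points")
```

Doing the work only moved the engineers' estimate by four points, from 24 to 20, leaving it 39 points away from what was measured.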

Unfortunately, getting good at something doesn't feel good. You're constantly confronting your inadequacies. You're pushing against the edges of your capabilities. It hurts, it's uncomfortable, it takes a lot of effort and a lot of time. I've heard personal growth described as a painful awareness of the gap between your current capability and your taste, and damn that is painfully accurate. I would add, however, that it's a pain you never want to free yourself of. If your capability ever catches up to your taste, your judgment, your ability to discern good from bad, then you've reached a plateau. Feeling superior about your abilities is dangerous. It's literally impossible to get better at something with only positive feedback. Ironically, RLHF means that AI is getting both positive and negative feedback when humans aren't.

Now I want to reiterate a previous point: AI labs prioritise making you feel good about your work above making you good at your work. Really, why would they want you to be good at your work at all? Then you might not need or want their services.

I've got bad news for people like me who actively avoid using AI. I suspect you don't have to use AI for it to make you stupider. That's right, big tobacco gave us passive smoking and now Sam Altman may have given us second-hand brainrot.

Remember what I said about the gap between your capabilities and your taste? Whether you're a writer, visual artist, software engineer, or musician, how did you develop your taste? How do you continue developing it? By seeking out great works, great art, that inspire you. By finding other people's creations that push the boundaries of what you thought possible, or perhaps creatively break a rule you thought was immutable.

One of the things I've learned in my long career as a software engineer is that you can't separate the people from the system. They're not just an input nor an influence; people are an integral part of the system. In software engineering, teams of developers change the codebase to change the behaviour of the system, but the codebase also changes the behaviour of the team. You can change how a team behaves by changing the structure of the code.

The same principle applies to us as creative human beings making art or performing our chosen craft. We can't separate ourselves from the existing work that's out there. Not only will AI work crowd out the great works, potentially robbing us of inspiration, but the AI generated works that we fail to identify and filter out may subtly and subconsciously lower our standards. The AI slop is going to affect our behaviour, even if it is just making us hesitate and question whether using that em-dash is worth risking our hard work being dismissed as AI generated. We’re robbing future generations of fresh shoulders to stand on.

Connecting this back to Total Skill Collapse, in previous sections we saw how AI erodes the economic incentives that attract, develop, and maintain expertise. Now we've also considered how AI can actually erode existing expertise, even amongst experts that are actively avoiding using AI. I've got to admit, before I started writing this article, I hadn't given the second point as much consideration. The negative effects of generative AI on the aggregate capability of human populations might be grossly underestimated. With the negative effect on human skills accelerating model collapse, and model collapse accelerating human skill collapse, total skill collapse might happen faster than we imagine.

A Just Tragedy Of Profound Stupidity Awaits AI Labs

You might be thinking “but surely the model providers would stop training new models before things really collapsed?” and it's a reasonable question. However, it's unlikely for two reasons: profit and hubris. In the case of disciplines like software engineering there's a third reason: without industry experts to judge the output of LLMs, how will they know when to stop?

Unprecedented amounts of debt and venture capital have gone into generative AI. Over a trillion dollars. So far, generative AI has yet to produce even $100 billion of revenue. As of 9th March 2026, Anthropic has made less than $6 billion in revenue over its entire lifetime. REVENUE! Every AI lab (Anthropic, OpenAI, Google, xAI) survives only by edging investors with headline-grabbing and utterly misleading claims insinuating they're about to “achieve AGI” or “end software engineering”. They also need to show revenue growth. Doesn't matter if it's sustainable, doesn't matter whether or not it's proportional to the funds raised, all that matters is number go up.

Does it matter that number go’ed up because someone just paid $3 to produce some knock-off slop that will wind up in the training data while discouraging the human creators whose content they need to keep subsequent models relevant and fresh? Nope. Only number go up! Another company used AI as an excuse to lay off software engineering teams maintaining open source software? nUmBeR Go UP! Disney laid off a whole team of artists? YES! nUmBER gO uP!! But didn't you train on Dis—STFU, NUMBER GO UP!

Regardless of how much AI Labs need "training" to sound like a finite process that will stop one day, the fact is that they cannot stop training, ever. They can't risk falling behind their competitors. They can't admit to investors that they aren't going to achieve AGI. All of this is based on a delusional notion that technology will always get better, forever. A willful denial of process limits, physical constraints, and other ways of describing actual fucking reality.

The utterly deluded, probably AI-psychotic CEOs & founders of these companies are not going to suddenly wake up one day, slap their foreheads, and say “oh, we've got to stop AI before humans stop creating, thus destroying our business!” No, they're going to extract as much money as they can before the music stops.

At the very least, we have to acknowledge that vast & unprecedented sums of money are being gleefully burned building infrastructure dedicated to producing – at great expense and negative margins – content nobody wants, nor asked for.

For Once, I Don't Have Many Ideas…

Usually I can look at a system, spot the leverage point where you can exert the most influence, and figure out how to change the incentives and behaviours of actors or subsystems. In this case, I'm somewhat at a loss, because the very resource AI companies feed on to create their products is human endeavor. We can't very well stop creating, nor can we stop consuming. Stopping either would only accelerate our chaotic journey towards the outcome to which gen-AI sent us hurtling. Slop would fill the void, audiences would give up, and talentless generations would grow up robbed of the opportunity to know any better.

The only idea I have is going to take years, probably decades. If the economic system we have is always going to create these incentives that lead to these vulturous AI-labs, gullible venture capitalists, and idiotic business leaders, then we need a new economic paradigm. I know it's trite, but you are reading this article on this website, and I did manage to bite my tongue for a couple thousand words.

In a democratic economy, limitations on profits disincentivize irrationally chasing infinite growth and instead incentivize fulfilling, useful work. Within democratic companies, shared ownership, shared risk, and shared accountability render impotent the charismatic business idiot imbued with an infinite supply of unearned confidence. With a larger number of smaller companies, state capture and societal destruction by Bond villain-esque corporations become difficult and improbable. When a business strategy will incur outrageous negative externalities, members (employee-owners) can vote it down and demand an ethical alternative strategy. When new businesses looking for funding raise capital from their financially saturated democratic suppliers and clients rather than venture capital firms that only further enrich the obscenely wealthy, demand for infinite-growth, number-go-up software business grifts will naturally evaporate.

We can't put the genie back in the bottle; generative AI can't be uninvented. But that doesn't mean we have to accept the course we are on. It might still exist, but it doesn't have to be as prominent, and slop doesn't have to be as prevalent. I think most importantly, AI doesn't have to be centrally owned and controlled by destructive for-profit companies seeking to commoditize human creativity. To have a chance in hell of successfully regulating AI and updating copyright law, we need an economic system where businesses naturally don't grow so large they can capture governments.

When the AI bubble inevitably bursts, and it will burst, we will have a once in a lifetime opportunity to stand up a new economic paradigm in place of the technofeudal system Silicon Valley has been building and inflicting on the world since the 2008 global financial crisis. So let's keep creating, keep honing our talents, get real fucking good, and when the technofeudalists inevitably fuck it up we will be ready, not to seize the means of production, but the whole damned economy.

The world doesn't have to be run by assholes, let's try running it democratically.